Google Launches Gemini, an AI Model to Rival ChatGPT [Here's How to Access It]

ChatGPT sent shockwaves through the tech world when it launched in late 2022, showcasing advanced conversational abilities that captured public imagination. Seemingly caught off guard, Google has raced to respond, finally unveiling its own ChatGPT rival dubbed Gemini.

Gemini comes in three variants aimed at different applications. Gemini Nano runs natively on Google’s Pixel phones, while Gemini Pro powers the company’s Bard chatbot.

However, the most transformative version looks to be Gemini Ultra - an AI colossus intended for enterprise and data center deployment that Google claims outmatches GPT-4 across nearly all benchmark tests.

Google CEO Sundar Pichai heralded Gemini as ushering in “a new era of AI” at the company. Gemini builds upon extensive Google research into areas like multimodal learning and efficient neural architectures.

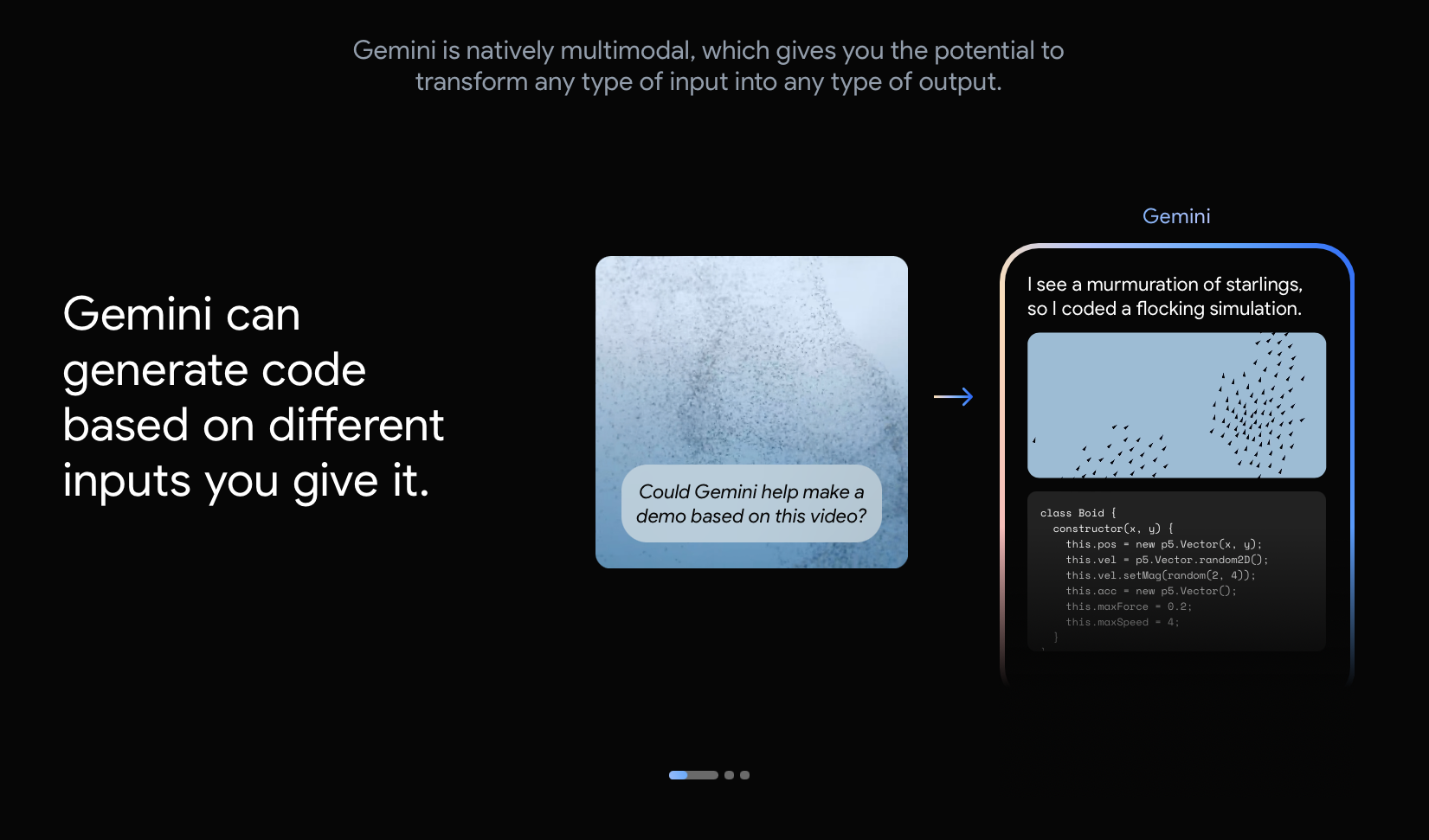

Multimodality could prove a key advantage over text-only systems like ChatGPT, with Gemini able to understand and generate images, audio and video alongside text.

Gemini vs ChatGPT

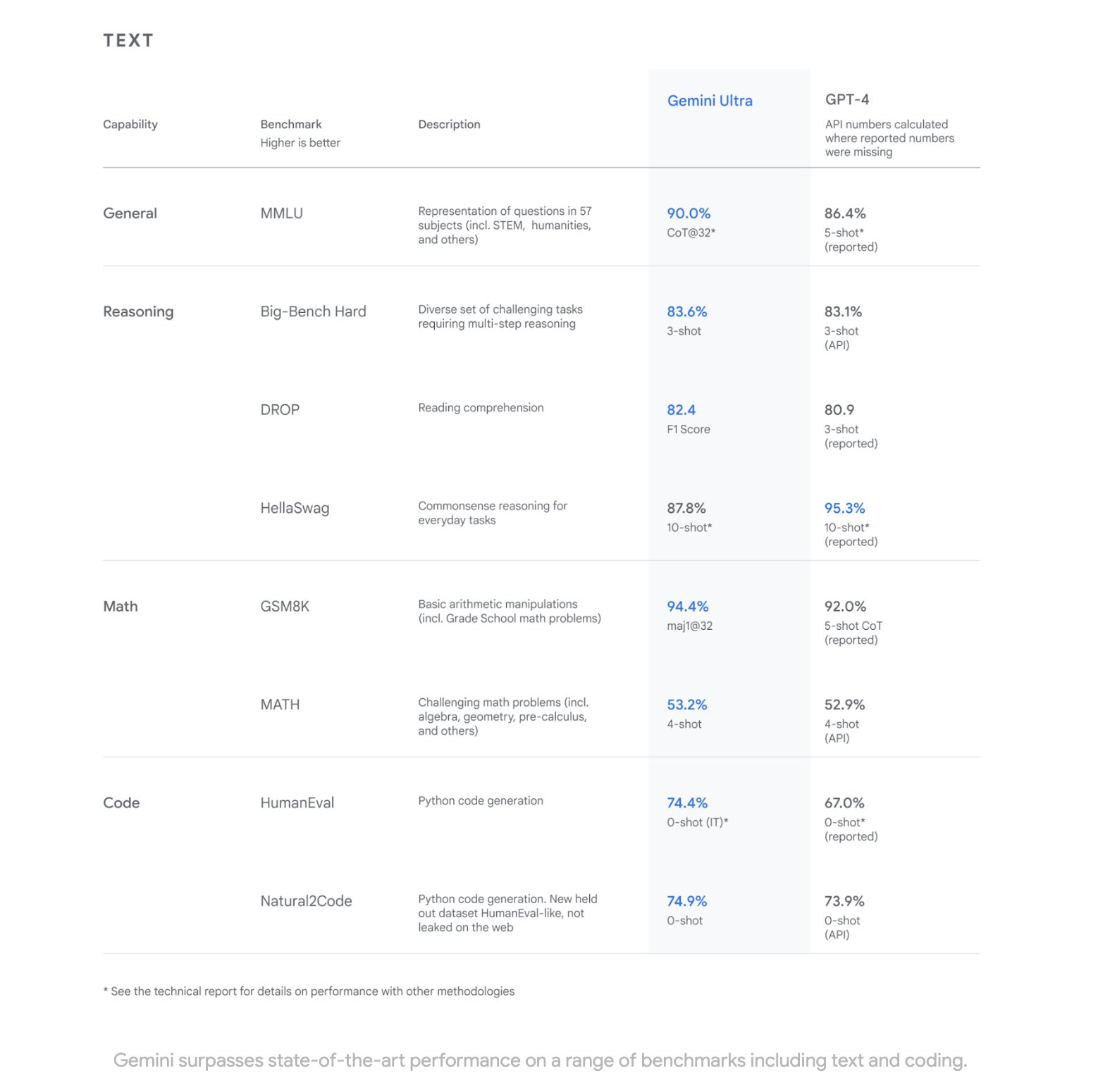

In controlled testing, Google asserts that Gemini Ultra outperformed GPT-4, the model behind ChatGPT, on 30 of the 32 benchmarks used to assess large language models. These encompassed diverse metrics evaluating versatility, reasoning ability and coding skills.

Gemini Ultra scored 90% on the Massive Multitask Language Understanding (MMLU) benchmark, which Google says makes it the first model to outperform human experts on that test - offering evidence of possible technological leadership.

Testing regimes themselves remain contested, however - scores can fluctuate drastically based on dataset and metric selections favoring one model over another.

Real-world usage tends to surface unpredictable model strengths and weaknesses less visible in controlled conditions.

Nonetheless, Google’s testing methodology aimed to be extensive. Spanning 32 benchmarks, it suggests that Gemini Ultra holds decisive advantages - rather than marginal gains - over its OpenAI rival.

Diving into Gemini’s technical paper reveals some intriguing details under the hood.

- 32K token context length

- Efficient attention mechanisms (e.g. multi-query attention)

- Audio input processing via Universal Speech Model (USM)

- Visual encoding inspired by Google’s Flamingo, CoCa, and PaLI computer vision models

- Image generation using discrete image tokens

- Training via supervised fine-tuning (SFT)

- Training enhancement with reinforcement learning from human feedback (RLHF)
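
The list above cites multi-query attention as one example of an efficient attention mechanism. As a rough illustration of the general idea only (not Gemini's actual implementation, which the source does not describe), the sketch below lets several query heads share a single set of keys and values. The function names are hypothetical, and learned projections, masking and batching are left out for brevity.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def multi_query_attention(queries, keys, values):
    """Illustrative multi-query attention (hypothetical helper).

    queries: [num_heads][num_queries][d]  - one set of queries per head
    keys:    [seq_len][d]                 - shared by every head
    values:  [seq_len][d_v]               - shared by every head
    Returns: [num_heads][num_queries][d_v]
    """
    scale = 1.0 / math.sqrt(len(keys[0]))
    value_dim = len(values[0])
    outputs = []
    for head_queries in queries:
        head_out = []
        for q in head_queries:
            # Scaled dot-product scores against the single shared key set.
            weights = softmax([dot(q, k) * scale for k in keys])
            # Weighted sum of the single shared value set.
            head_out.append([
                sum(w * v[j] for w, v in zip(weights, values))
                for j in range(value_dim)
            ])
        outputs.append(head_out)
    return outputs
```

In standard multi-head attention each head has its own keys and values. Here they are stored once and reused, so the key/value cache kept during text generation shrinks by roughly a factor of the number of heads.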

Multimodality is central to Google’s vision for Gemini and is its main point of differentiation, even though the products available today are text-focused. Google aims for Gemini to absorb more sensory inputs over time - interacting via touch, sound and more, much as humans do.

Foundational research projects at Google DeepMind like Flamingo already demonstrate key building blocks, processing images and text together to reason about the world.

Related benchmarks likewise highlight progress - Gemini Ultra can already generate code from visual information with techniques like unit testing.

Google’s TPU v5 chips tailor-made for AI acceleration will likely prove essential for advancing multimodal capacity. As with all neural networks, Gemini’s functionality scales extensively with data volume and compute resources allocated.

Gemini Powers a New Supercharged Coding Tool

Further showcasing its coding capabilities, Google has powered up AlphaCode into a new version called AlphaCode 2 to coincide with Gemini’s launch.

Building upon the original AlphaCode system that could competitively write programs against humans, AlphaCode 2 tackles more advanced challenges involving complex math and computer science concepts.

With its strengthened comprehension and reasoning abilities, Gemini takes AlphaCode 2 to new heights - Google estimates that it performs better than 85% of participants in competitive programming contests.

How to Access Google’s Gemini?

Possibly learning from OpenAI’s own reckoning with ChatGPT’s public launch, Google seems to be taking an iterative approach with Gemini’s integration into products.

Despite the proclaimed testing gains, only Gemini Pro is currently public-facing - it’s available inside the Bard chatbot right now.

Developers and enterprise customers will be able to access Gemini Pro through Google AI Studio or Vertex AI in Google Cloud starting on December 13th.

Additionally on the consumer front, the Gemini Nano version exclusive to Pixel phones unlocks on-device suggestions. Pixel 8 Pro owners can benefit from AI writing aids inside apps like Gboard keyboard and Recorder.

Though currently limited to text, these constitute early previews of Google’s ambition for an AI-assisted future across devices.

Gemini Ultra won’t be released until 2024. Google states it will experiment further in closed environments first, cautiously scaling access over time.

Positioning Ultra for controlled enterprise adoption aligns with Google Cloud’s increasing prioritization. Integration elsewhere is expected, however slowly - including core offerings like advertising, web search and browsers.

Far from finished, Gemini launched as a preview - missing some key attributes touted in its eventual specifications. But as the foundation for Google’s generative AI offerings in the coming years, its first appearance offers a glimpse of the beginning stages of a potential titan still coming into form.

With an apparent lead in benchmarks and long-term investment already set in motion, Gemini may one day spearhead a transformation sweeping across Google’s global ecosystem.